Yunsung Kim

Google Scholar / Email / GitHub / Twitter / LinkedIn

I am a Postdoctoral Scholar at the Stanford Graduate School of Education sponsored by Prof. Candace Thille. I received a PhD in Computer Science from Stanford University in 2024, where I was advised by Prof. Chris Piech. My research aims to build computational tools that can help instructors understand and aid their students more efficiently and effectively. I often use ideas from probabilistic modeling, machine learning, and artificial intelligence.

I was a Machine Learning Scientist Intern (Summer 2022) in the Learning Sciences Organization at Amazon, where I was supervised by Prof. Candace Thille. Before coming to Stanford, I finished my undergraduate studies at Columbia University, where I was fortunate to have worked with Prof. Augustin Chaintreau and Prof. Martha Kim.

News

| Apr 2026 | Two of my papers were accepted at ACM Learning@Scale 2026 |

| Apr 2026 | Two of my papers were accepted at ACL 2026 (1 Main, 1 Findings) |

| Apr 2026 | My project on the Learning Strategy Studio was generously awarded a $75,000 HAI Seed Grant |

| Mar 2026 | My book chapter on Personalized Learning Experiences with AI was published in AI Applications in Online Higher Education Administration (Routledge). |

Teaching

One of the greatest sources of inspiration for my research is my love of teaching! Here are the courses I've been involved with:

- (Spring 2026) CS 407/Educ 407: Lytics Seminar (Instructor)

- (Summer 2023) CS109: Probability for Computer Scientists (Main Instructor)

- (Spring 2023) EDUC 259B: Education Data Science Seminar (Guest Lecture, 04/14/2023)

- (Spring 2022) CS109: Probability for Computer Scientists (Teaching Assistant)

- (Fall 2021) CS109: Probability for Computer Scientists (Teaching Assistant)

- (Summer 2021) CS109: Probability for Computer Scientists (Teaching Assistant)

- (Spring 2020) CS106A: Programming Methodology (Teaching Assistant)

Recent Research

2026

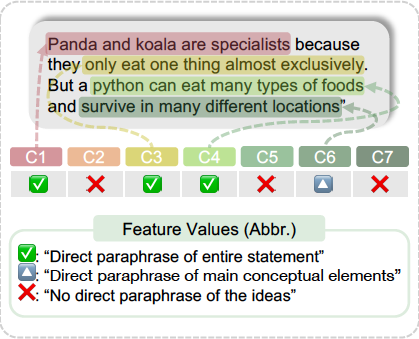

Interpretability from the Ground Up: Stakeholder-Centric Design of Automated Scoring in Educational Assessments

Yunsung Kim, Mike Hardy, Joseph Tey, Candace Thille, Chris Piech

ACL Findings, Association for Computational Linguistics, 2026

paper

This paper argues for a shift in how automated scoring should be formulated and studied: from treating automated scoring as an isolated prediction task to situating it within the broader stakeholder-centered assessment lifecycle spanning assessment design, scoring, and iterative refinement. We present four foundational, stakeholder-centered principles of interpretable automated scoring and demonstrate its feasibility by achieving high scoring accuracy and alignment with human judgment.


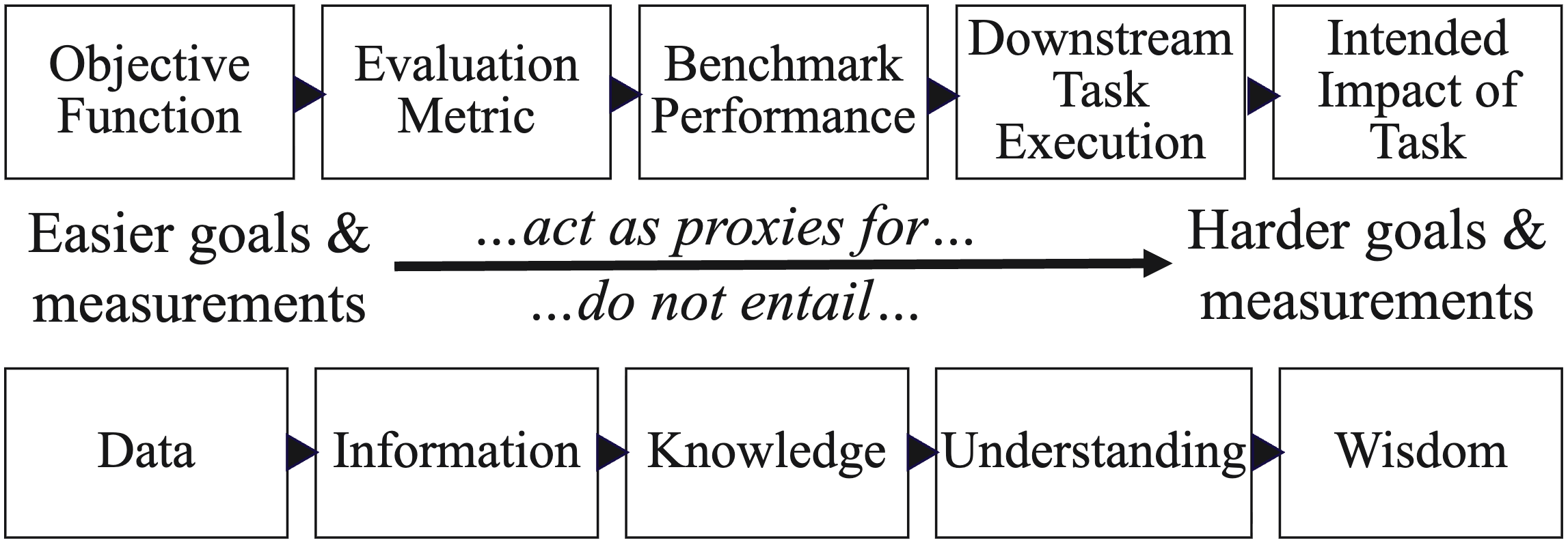

Knowledge without Wisdom: Measuring Misalignment between LLMs and Intended Impact

Mike Hardy, Yunsung Kim

ACL, Association for Computational Linguistics, 2026

paper

Large language models show impressive performance on benchmarks. But do these results align with downstream tasks and their actual intended impact? Focusing on the task of teaching and learning of schoolchildren, we show that LLMs are poorly aligned with downstream measures of teaching quality and are often negatively aligned with the intended impact of student learning outcomes. This work demonstrates methods for robustly measuring alignment of complex tasks when the measurement of intended impact is noisy.


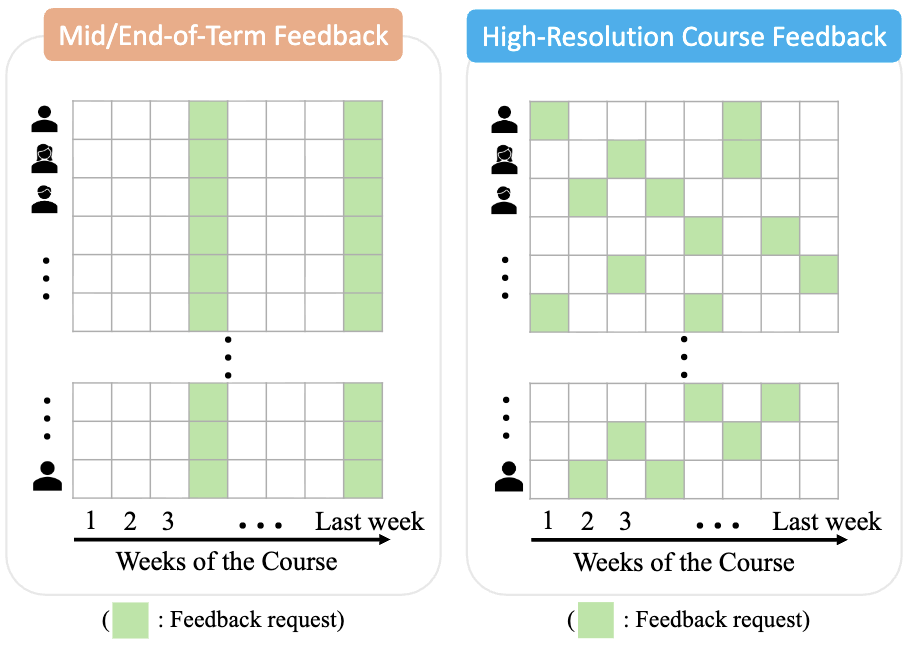

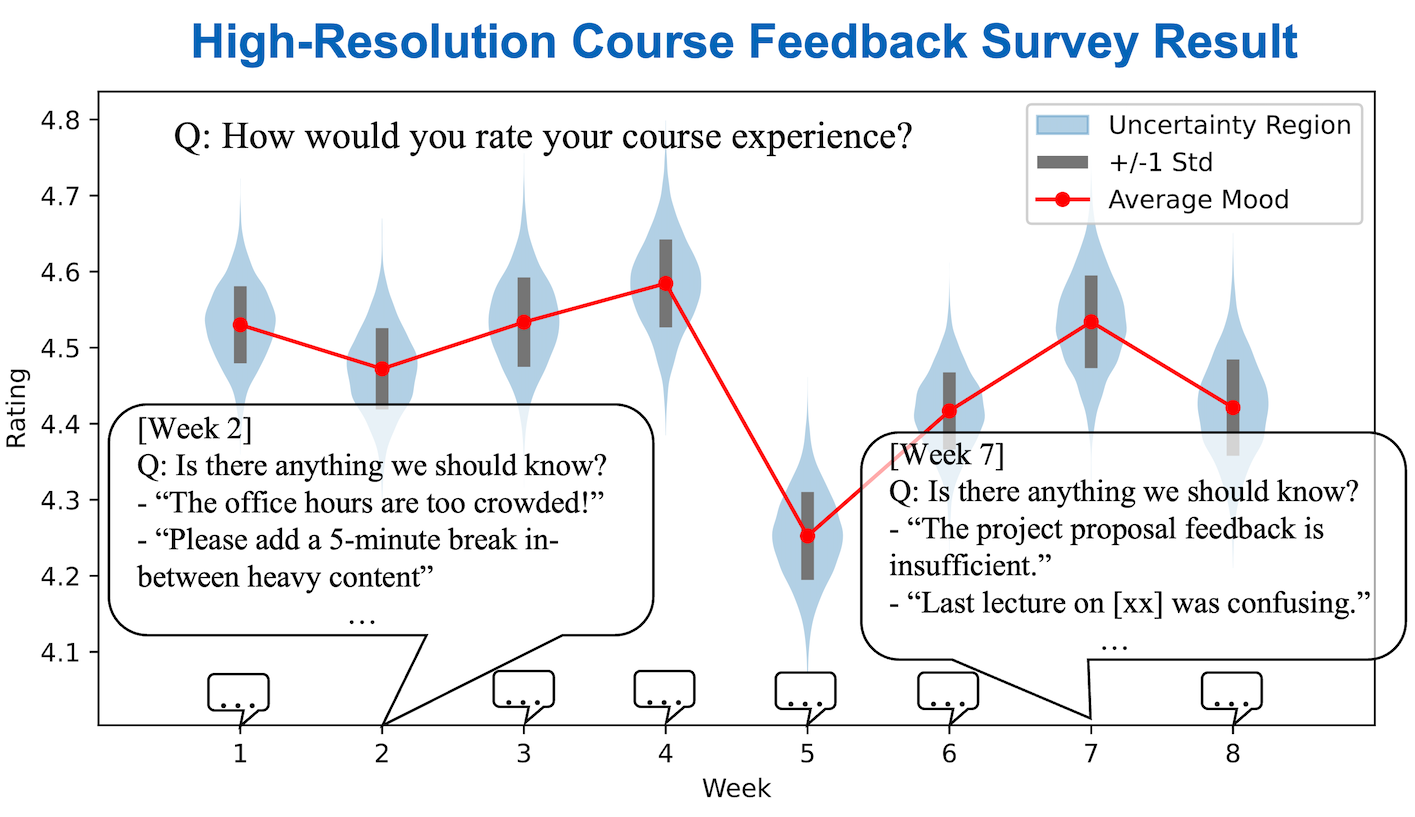

A Large-Scale Observational Study on Obtaining Lightweight, Randomized Weekly Student Feedback

Yunsung Kim, Hansol Lee, Candace Thille, Chris Piech

L@S, ACM Conference on Learning @ Scale, 2026

paper / code / website

We show that High-Resolution Course Feedback (HRCF) can support measurable improvements in students’ perceived learning experience. Studying 103 HRCF course offerings of 42 computer science courses across 4 academic years, we find that each additional term of HRCF use is associated with modest but significant gains in learning-related evaluations for medium/low-enrollment courses (<250 students).


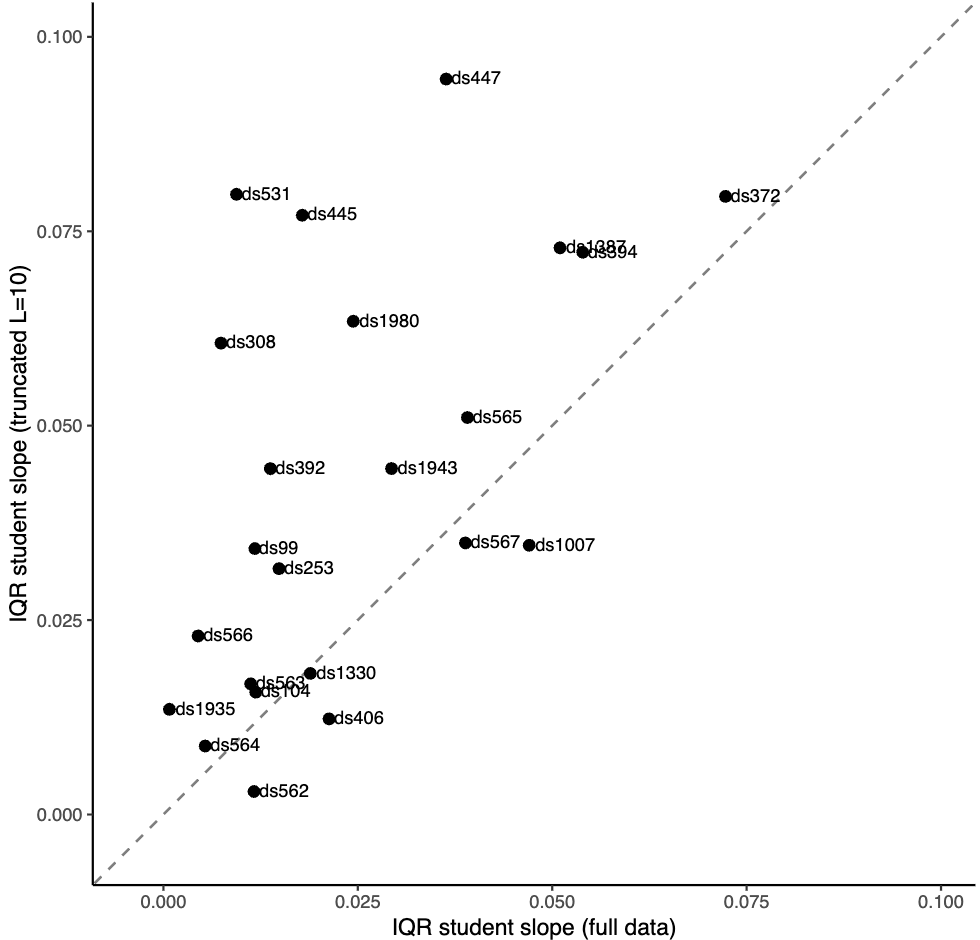

The "Astonishing Regularity" Revisited: Sensitivity of Learning-Rate Estimates to Practice-Sequence Length

Hansol Lee, Guilherme Lichand, Cristina Barnard, Lucas Klotz, Candace Thille, Yunsung Kim, Benjamin W. Domingue

L@S, ACM Conference on Learning @ Scale, 2026

paper

This paper revisits the claim that students learn at surprisingly similar rates by testing how sensitive learning-rate estimates are to the length of students’ observed practice sequences. Across 26 datasets, truncating learning sequences at 10 or 5 opportunities substantially inflates estimated learning-rate heterogeneity, indicating that practice-sequence length is an important and underreported source of uncertainty in mixed-effects learning models.

2025

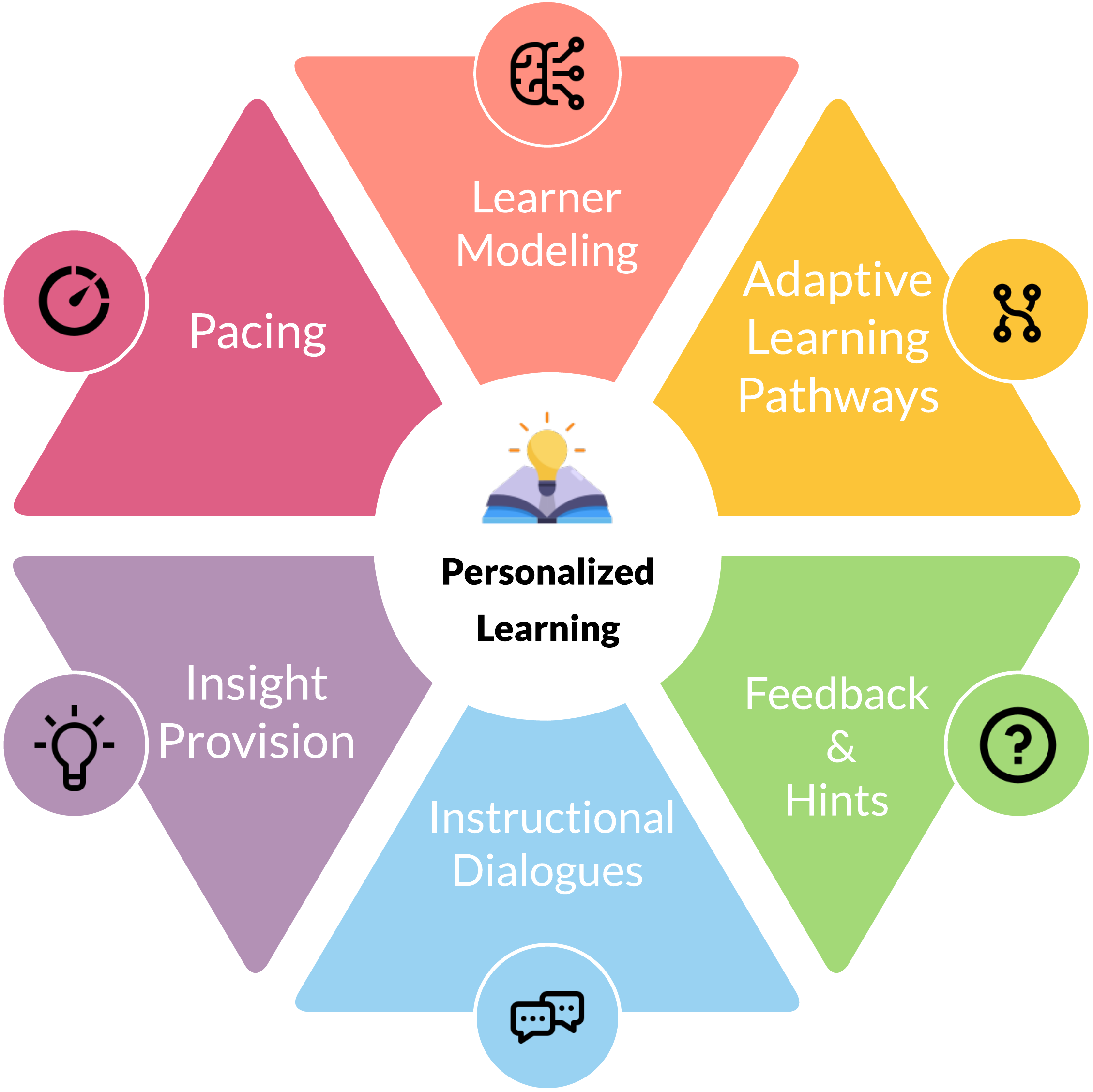

Personalized Learning Experiences with AI: An Overview for Decision-Makers and Practitioners

Yunsung Kim, Candace Thille

Preprint, 2025

paper

This article provides an overview of technology-mediated personalized learning and the role of AI for higher education decision-makers, administrators, and practitioners. We review the historical foundations of AI and personalized learning, the key components of modern personalized learning systems, and the impact of emerging generative AI technologies. We conclude with a discussion of the role of higher education in shaping the future of AI-driven personalized learning.

2024

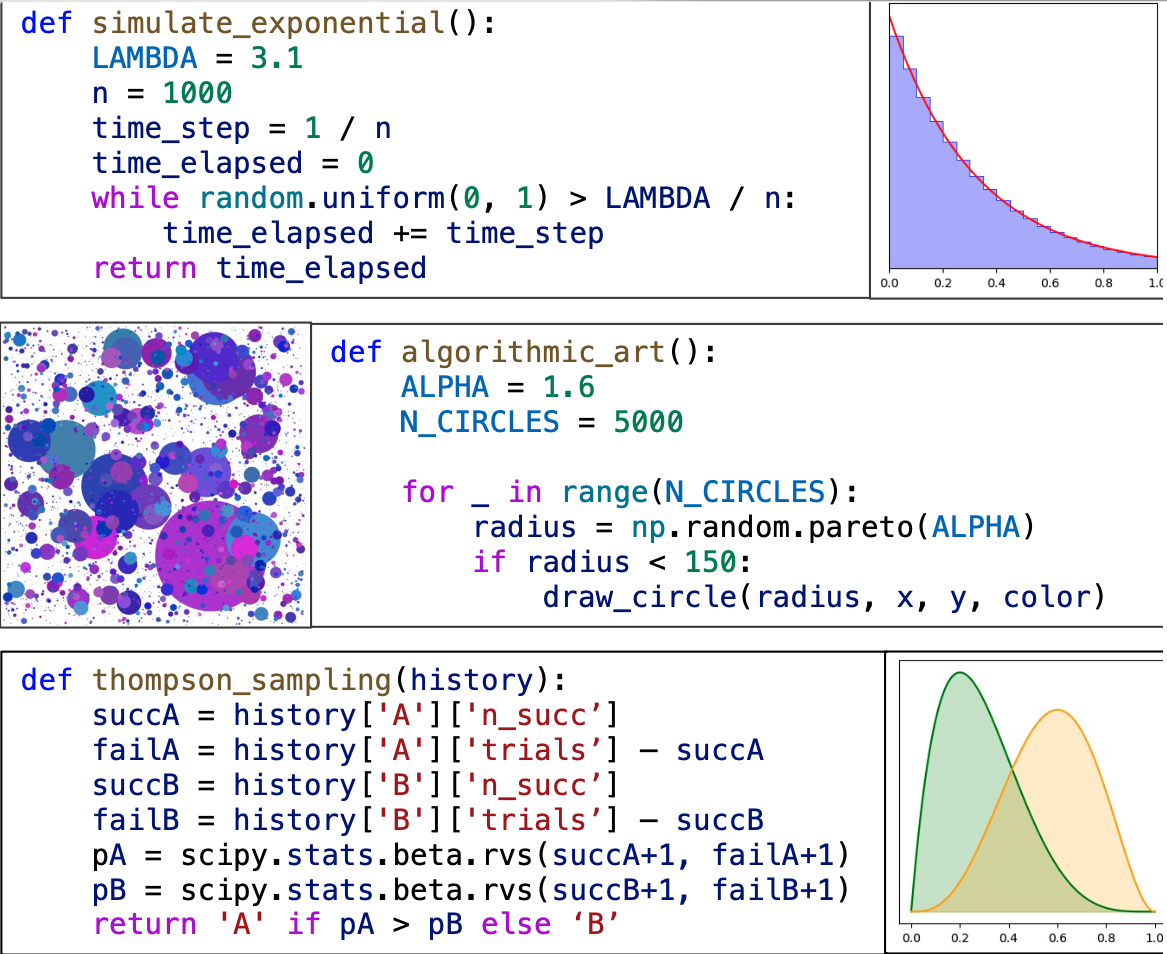

Grading and Clustering Student Programs That Produce Probabilistic Output

Yunsung Kim*, Jadon Geathers* (Equal Contribution), Chris Piech

EDM, International Conference on Educational Data Mining, 2024

paper / code

StochasticGrade is an open-source framework for auto-grading programs that produce probabilistic output. It offers an exponential speedup over the standard two-sample hypothesis testing baseline on identifying incorrect programs and allows the rate of misgrades to be adjusted. Moreover, the “disparity measurements” calculated by StochasticGrade can be used for fast and accurate clustering of student programs by error type. We demonstrate the accuracy and efficiency of StochasticGrade using student data collected from 4 assignments in an introductory programming course.

2023

High-Resolution Course Feedback: Timely Feedback Mechanism for Instructors

Yunsung Kim, Chris Piech

L@S, ACM Conference on Learning @ Scale, 2023

paper / code / website

High-Resolution Course Feedback (HRCF) is a tool for minimizing the delay between when an issue comes up in a course and when the instructors get feedback about it. It requests feedback from a small random subset of the students each week, but surveys each student exactly twice per term. We demonstrate that without extra effort compared to mid/end-of-term surveys, HRCF can provide constructive and actionable feedback early on.


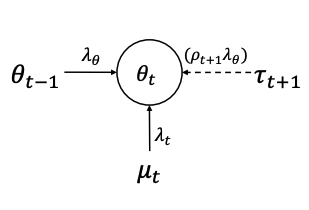

Variational Temporal IRT: Fast, Accurate, and Explainable Inference of Dynamic Learner Proficiency

Yunsung Kim, Sree Sankaranarayanan, Chris Piech, Candace Thille

EDM, International Conference on Educational Data Mining, 2023

paper / code

Variational Temporal IRT (VTIRT) is a new algorithm for inferring dynamic learner proficiency when learning occurs alongside assessment. While retaining high inference quality, VTIRT is 28 times faster than the fastest existing inference algorithm and provides interpretable inference through its modular algorithm.


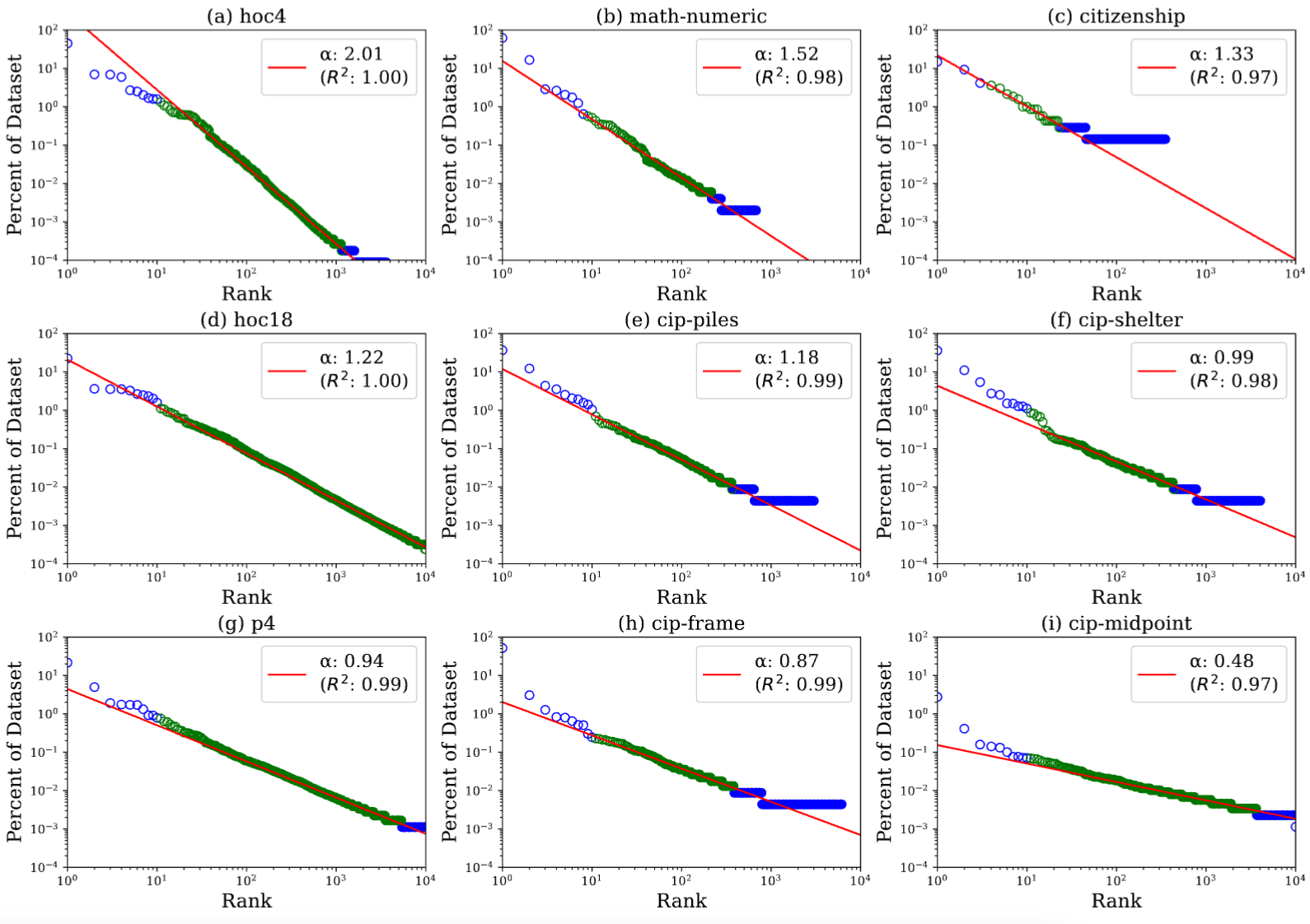

The Student Zipf Theory: Inferring Latent Structures in Open-Ended Student Work To Help Educators

Yunsung Kim, Chris Piech

LAK, International Learning Analytics and Knowledge Conference, 2023

paper (NB: Some figures are not properly displayed in Chrome) / code

Are there structures underlying student work that are universal across every open-ended task? We demonstrate that, across many subjects and assignment types, the probability distribution underlying student-generated open-ended work is close to Zipf’s Law. We discuss how inferring this latent structure can help classrooms and develop an inference algorithm.


Adapted from Leonid Keselman's fork of Jon Barron's website.